Run high-performance production inference on infrastructure built for billion- and trillion-token daily workloads. Get the speed, reliability, and efficiency you need to grow—plus up to $50K in inference credits to get started.

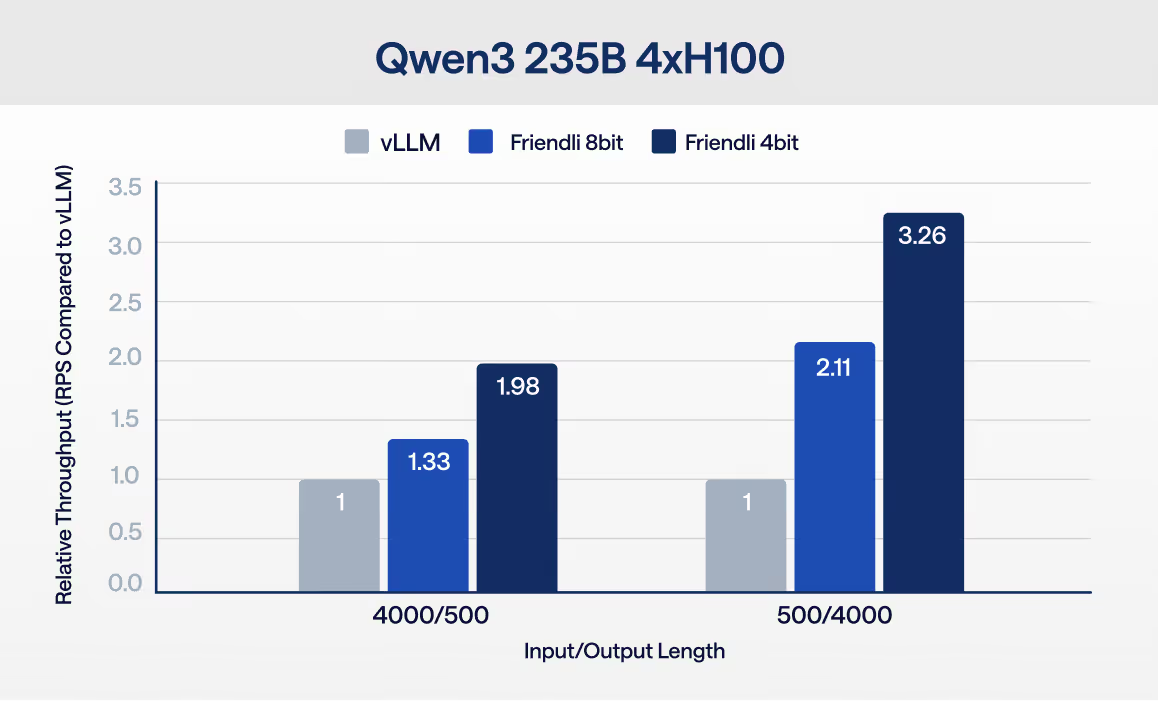

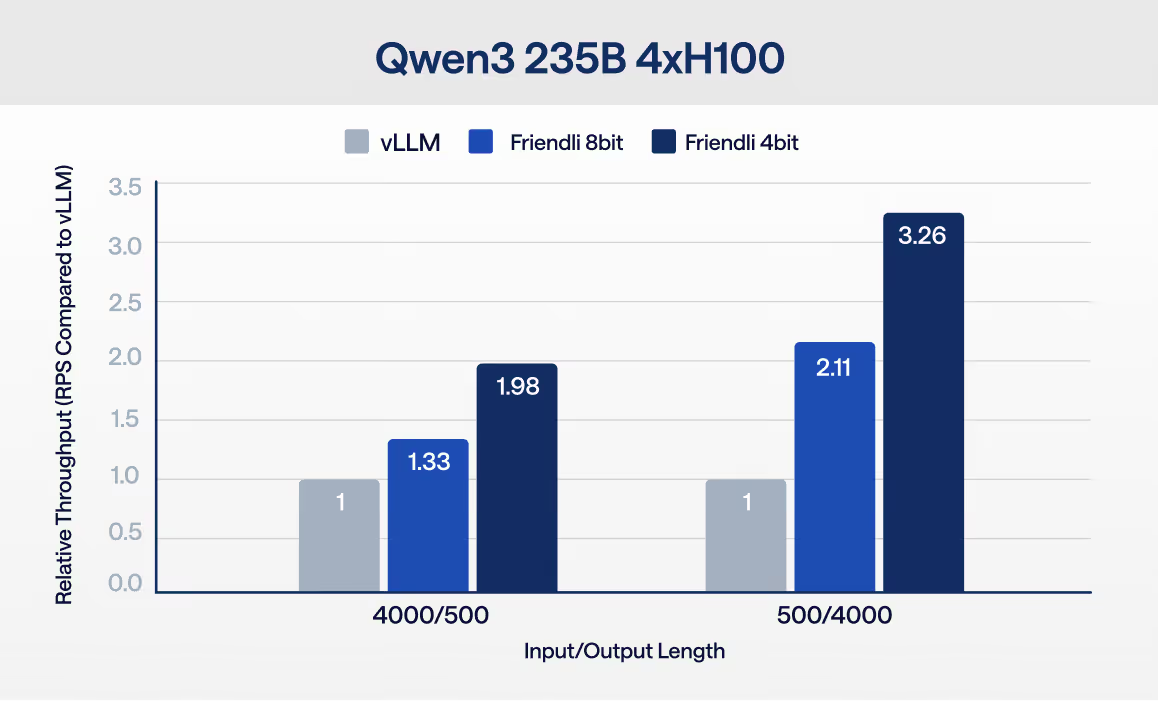

Many teams can reach a prototype quickly, but production scale introduces new constraints: latency spikes, throughput ceilings, infrastructure complexity, and rising costs. FriendliAI helps teams scale open-model inference with the performance, reliability, and efficiency that real production demand requires, without forcing major application changes.

1. Submit the form with your details and current provider bill.
2. We review and approve your credit amount.
3. Start running inference on FriendliAI using your credits.